Apr 16, 2024 by Neil Strange

Empowering data mesh: The tools to deliver BI excellence

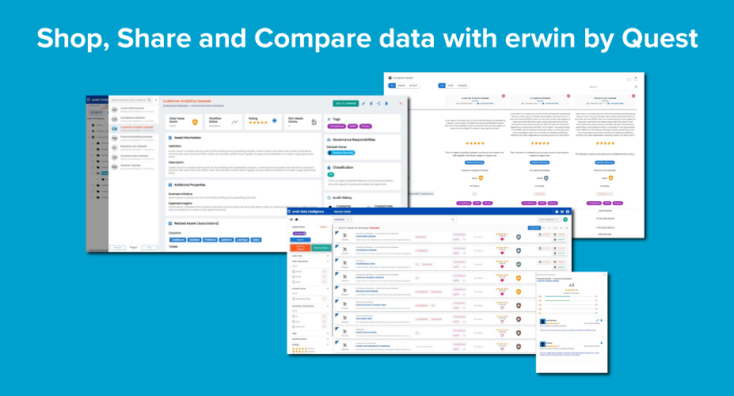

The data mesh framework

In the dynamic landscape of data management, the search for agility, scalability, and efficiency has led organizations to explore new approaches. One approach gaining traction is the data mesh framework, which distributes data ownership and decentralizes data architecture to make data platforms more agile and scalable. […]